What is Crowdsourcing Cultural Heritage?

Crowdsourcing takes projects created by institutions or organizations and outsources tasks to the general public. Wikipedia is the best-known example. In cultural heritage, crowdsourcing typically involves a project generated by a gallery, a library, an archive, or a museum (GLAM). Project designers digitize large amounts of data, build a database to house the digitized objects, and create a platform that hosts collections based on these data and serves as a bridge between the data and its audiences.

A critical feature of cultural heritage institutions with digitized collections is their reliance on the public to help curate the large amounts of data those collections contain.

Mia Ridge, the British Library’s Digital Curator for Western Heritage Collections and an expert on building digital projects, expands on the purpose of these endeavors: “…crowdsourcing projects are designed to achieve [a] specific goal through audience participation, even if that goal is as broadly defined as ‘gather information from the public about our collections’” (Introduction). To create successful crowdsourcing projects, then, designers should build user-friendly platforms that encourage audience members to discover these collections and to help curate them with their contributions.

General Purpose and Benefits

Typical motivations for creating and participating in crowdsourcing projects in cultural heritage are to:

(1) generate greater public engagement with digitized collections

(2) create a venue for audience members to pose research questions and generate new knowledge

(3) address the huge task of curating vast amounts of data

Sharon Leon, when discussing the benefits of having community-sourcing efforts in place at cultural heritage institutions with digital collections, describes the importance of understanding audience motivation and engagement depth, suggesting that, “Public contributions can serve as a barometer of the most interesting materials within a particular collection.” (14)

Crowdsourcing Audiences

Audiences are typically diverse, ranging from scholars to the general public. So whether you are a historian, a researcher, a military history buff, a map junkie, a middle school student, a paleographer, a genealogist, or a retiree, your contributions matter: crowdsourcing project designers need input from many individuals in order to sustain such initiatives.

Crowdsourcing Tasks

There are multiple tasks involved in sustaining crowd curation, including: transforming content to new formats, producing artefacts, creating descriptive tags, annotating, and correcting errors (Ridge, Introduction: Crowdsourcing Our Cultural Heritage). Let’s take a look at four different cultural heritage projects that rely heavily on crowdsourcing: Transcribe Bentham, Building Inspector, Trove, and Papers of the War Department.

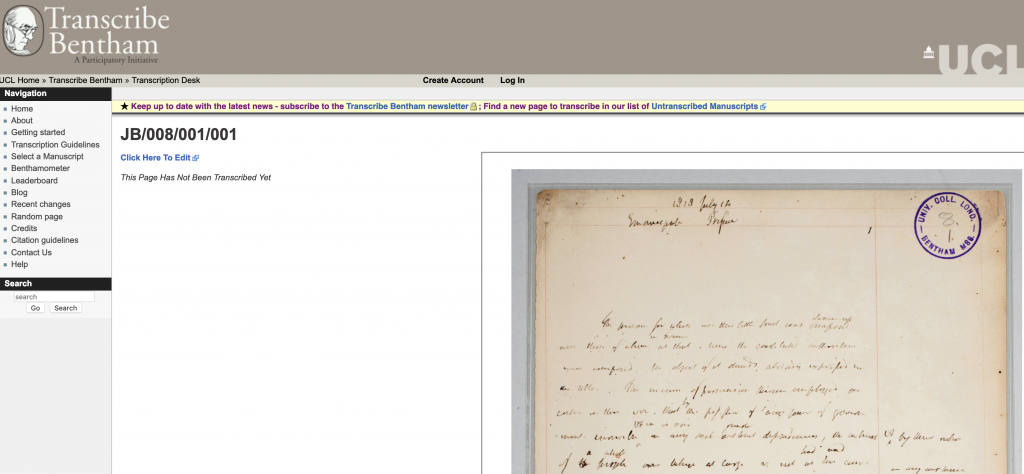

Transcribe Bentham

Transcribe Bentham is a participatory editorial project that recruits contributors to help transcribe digitized manuscripts (60,000 folios) written by the social reformer Jeremy Bentham. A collaborative effort between University College London’s Bentham Project, various digital humanities institutes, and members of the public, Transcribe Bentham aims to increase the discoverability of, and access to, The Collected Works edition.

Who Contributes and Why?

Contributors are online volunteers with internet connectivity (over 1,500 as of 2012) and the Transcribe Bentham editors. The main goal is to engage the public with the works of the philosopher and social reformer Jeremy Bentham (1748-1832) through the transcription of his manuscript papers and the creation of a searchable repository of The Collected Works edition. Participants can contribute to research on this scholar, take part in efforts to preserve the work of an important figure in history, learn about Bentham’s works, and gain skills in paleography.

What Tasks do They Undertake?

Users read Bentham’s handwriting to get a good grasp of the content to be transcribed; transcribe the manuscripts to produce a diplomatic transcription that faithfully represents the manuscript text; and encode their transcripts in TEI-compliant XML, applying tags that help curate a more powerful and refined resource.
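To make the encoding task more concrete, here is a minimal Python sketch of what a TEI-style encoded line might look like. The elements `<p>`, `<del>`, and `<add>` are standard TEI elements for a paragraph, a deletion, and an addition, but the sample sentence, the helper name `encode_line`, and the choice of tags are assumptions made for illustration. They are not taken from Transcribe Bentham's own guidelines or toolbar.

```python
import xml.etree.ElementTree as ET


def encode_line(before: str, deleted: str, added: str, after: str) -> str:
    """Wrap one transcribed line in TEI-style markup (hypothetical helper).

    Marks a single crossed-out word with <del> and its replacement with <add>.
    """
    paragraph = ET.Element("p")
    paragraph.text = before

    deletion = ET.SubElement(paragraph, "del")
    deletion.text = deleted
    deletion.tail = " "  # text that follows the closing </del> tag

    addition = ET.SubElement(paragraph, "add")
    addition.text = added
    addition.tail = after  # remainder of the line after </add>

    return ET.tostring(paragraph, encoding="unicode")


# Invented sample text, not a real Bentham manuscript line.
encoded = encode_line("The greatest ", "good", "happiness", " of the greatest number.")
print(encoded)
```

Building the markup with an XML library rather than by string concatenation means the result is always well-formed, which is the kind of thing an editor would otherwise have to check by hand.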

What Kind of Interface do They Use?

The “Transcription Desk” is the interface that hosts digitized manuscripts and the transcription tool (MediaWiki). Transcribe Bentham also makes use of TEI XML and the Keyword Spotting Tool.

How are These Validated?

After page sections are transcribed, users submit their work to the Transcribe Bentham editors for checking. Submissions may include questions and comments. Editors check the accuracy of words and tag associations, and send feedback once the transcriptions have been reviewed.

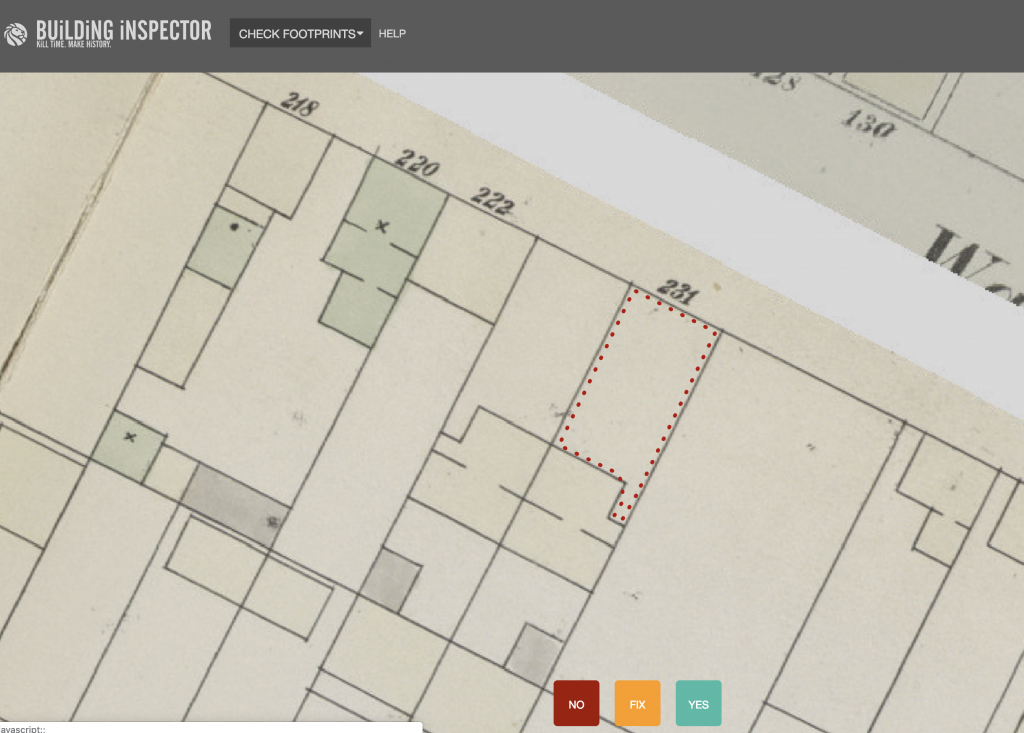

Building Inspector is…

Who Contributes and Why?

What Tasks do They Undertake?

A building inspection may take different forms. Contributors may check and fix footprints, enter addresses, name places, or classify colors. Checking footprints builds consensus on the accuracy of peer inspections. Fixing footprints lets users identify errors in footprints and correct them. Entering addresses helps locate numbers that are hard to read or addresses that have changed. Classifying colors helps Building Inspector index particular details regarding construction materials and property zone types.

What Kind of Interface do They Use?

The interface makes use of the Map Vectorizer tool, LeafletJS, Heroku, and Amazon CloudFront.

How are These Validated?

Once you submit your building inspection, the system places it in a queue and then seeks consensus among fellow inspectors on its accuracy. The system tallies votes at a fixed interval (every 10 minutes). Once the inspection reaches consensus (75% or more agree), the system takes it off the queue. If fellow inspectors are still deliberating, your inspection continues circulating until it reaches consensus.
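The consensus rule described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Building Inspector's actual code: the function names, the vote labels, and the minimum number of votes are all hypothetical, and only the 75% threshold comes from the description above.

```python
from collections import Counter
from typing import Dict, List, Optional

CONSENSUS_THRESHOLD = 0.75  # "75% or more agree", per the description above
MIN_VOTES = 3  # assumption: the source does not state a minimum vote count


def find_consensus(votes: List[str]) -> Optional[str]:
    """Return the leading answer if it meets the threshold, else None."""
    if len(votes) < MIN_VOTES:
        return None
    answer, count = Counter(votes).most_common(1)[0]
    if count / len(votes) >= CONSENSUS_THRESHOLD:
        return answer
    return None


def tally_queue(queue: Dict[str, List[str]]) -> Dict[str, str]:
    """Run one tally pass: remove resolved inspections from the queue and return them."""
    resolved = {}
    for inspection_id in list(queue):  # copy the keys so we can delete while looping
        result = find_consensus(queue[inspection_id])
        if result is not None:
            resolved[inspection_id] = result
            del queue[inspection_id]
    return resolved


# Hypothetical vote labels and inspection IDs.
queue = {
    "footprint-1": ["yes", "yes", "yes", "no"],  # 3 of 4 agree: consensus
    "footprint-2": ["yes", "no", "fix", "yes"],  # 2 of 4 agree: keeps circulating
}
resolved = tally_queue(queue)
print(resolved)      # inspections that reached consensus in this pass
print(list(queue))   # inspections still awaiting more votes
```

In a running system, `tally_queue` would be called on a schedule (every 10 minutes in the description above), and anything left in `queue` would be shown to more inspectors before the next pass.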

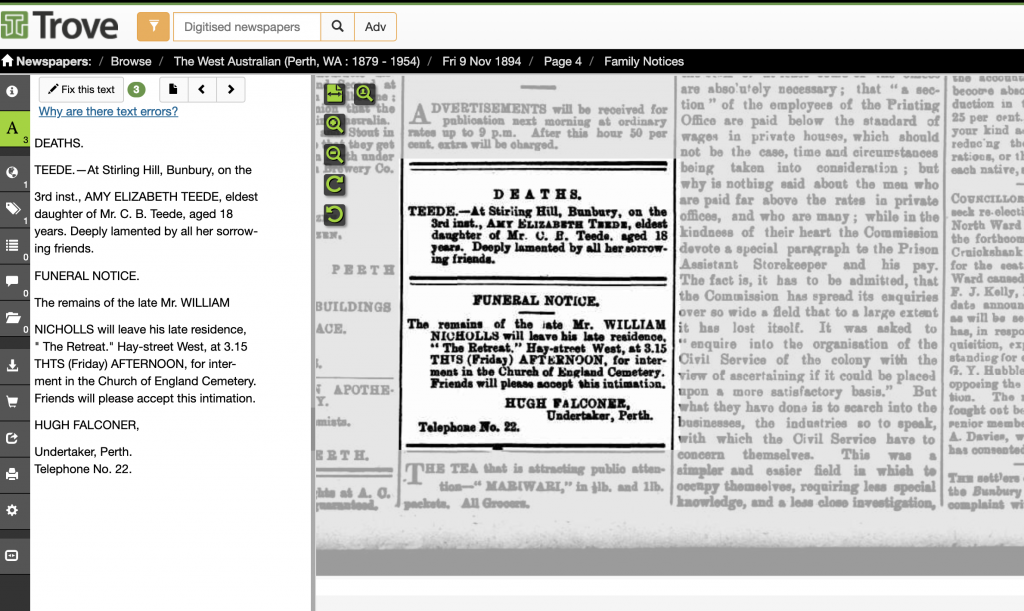

Trove

The National Library of Australia has collections of digitized newspapers that are transcribed to electronic format and made accessible on the Trove platform. Tim Sherratt, Manager of Trove, describes Trove as follows: “It’s that aggregation of the data and what that makes possible in terms of other people building new tools, new interfaces, and creating new forms of analysis to work across that material.” (https://dhcertificate.org/HIST-680/content/crowdsourcing-trove) To improve the searchability and accuracy of these OCR-transcribed materials, Trove relies on online contributors to correct the transcriptions.

Who Contributes and Why?

For Trove managers, interested in facilitating access to documentary heritage (in this case, digitized newspaper articles), the motivations were to find a process to improve search results, to engage users in that process, and to discover elements of surprise. For contributors, the project has been driven by more intrinsic motivations, such as pursuing personal research interests, getting involved in something “bigger than them” and with “lasting value”, giving back to the community, and enjoying the correction tasks. Marie-Louise Ayres (NLA) offers this profile of the typical user: “Together, this means that the ‘typical’ Trove user is a very well educated, highly paid, English speaking employed woman aged fifty or over, with a significant or primary interest in family or local history, who visits the Trove website very frequently.” (4)

What Tasks do They Undertake?

Contributor tasks include newspaper text corrections, item tagging, merging or splitting works, image uploading, and adding comments. These activities aid discovery, foster community collaboration, cultivate new research questions, and support new forms of analysis.

What Kind of Interface do They Use?

Trove relies on OCR software and on the Trove API, which provides access to metadata and some full text in Trove. The API also allows you to build your own applications, tools, and interfaces, among them: Serendip-o-matic, Trove Collection Profiler, Seabo, QueryPic, GlamMap, Europeana 1914-1918, and Vicfix.

How are These Validated?

There are at least three quality assurance phases in Trove. The first quality acceptance pass happens at the Library level, after the addition of metadata and the sequencing of pages. The second pass, also at the Library level, takes place after identifying missing newspaper content, removing duplicates, and grouping items into batches for OCR processing. OCR contractors then receive the images for processing. Any images with issues are returned for reprocessing.

A third quality assurance pass takes place on the public platform. Once the data has been OCR-processed, it is loaded into Trove for public access. Contributors then have a chance to correct the OCR text and have those changes saved in the database for others to view.
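As a rough illustration of this third pass, the Python sketch below compares a line of OCR output with a contributor's corrected version and lists the words that changed. It uses the standard-library `difflib` module; the function name `describe_correction` and the sample newspaper line are hypothetical, and nothing here reflects how Trove actually stores or displays corrections.

```python
import difflib
from typing import List, Tuple


def describe_correction(ocr_line: str, corrected_line: str) -> List[Tuple[str, str]]:
    """List word-level changes as (original words, corrected words) pairs."""
    ocr_words = ocr_line.split()
    new_words = corrected_line.split()
    matcher = difflib.SequenceMatcher(a=ocr_words, b=new_words)

    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # keep only replaced, inserted, or deleted spans
            changes.append((" ".join(ocr_words[i1:i2]), " ".join(new_words[j1:j2])))
    return changes


# Invented example of typical OCR misreadings ("b" for "h", "c" for "e").
ocr_text = "Tbe ship arrived at Sydncy yesterday"
corrected_text = "The ship arrived at Sydney yesterday"
changes = describe_correction(ocr_text, corrected_text)
print(changes)
```

Recording corrections as small before-and-after pairs, rather than overwriting the text, would let other users see exactly what a contributor changed.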

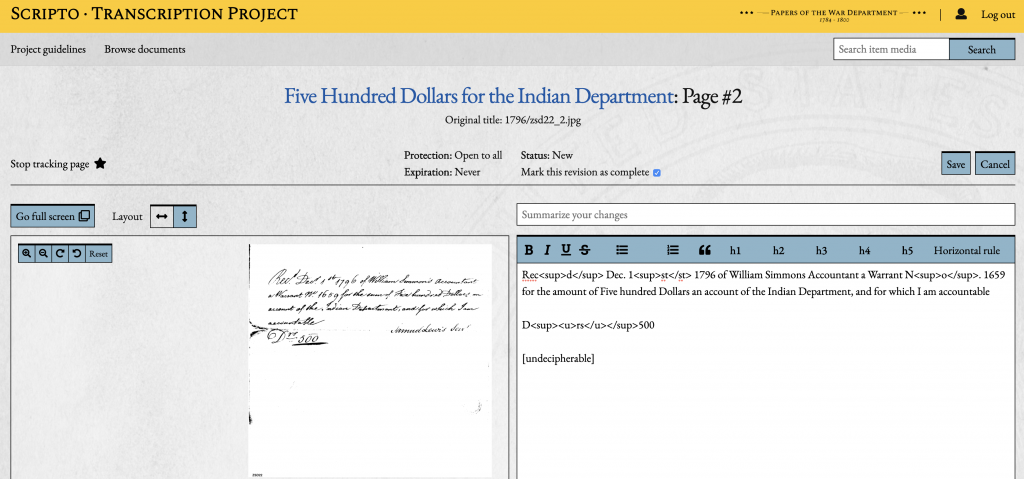

Papers of the War Department

The Papers of the War Department 1784-1800 is a digital collection of over 42,000 documents on military and Indian affairs, reconstructed from copies of originals that were damaged during the War Office fire of 1800. Thanks to the involvement of more than 200 repositories that hold print copies of the damaged originals, the creation of the digital tool Scripto by the RRCHNM team and PWD editors, and the contributions of community members, the cultural heritage of the Papers is freely accessible and searchable.

Who Contributes and Why?

Contributors are scholars, researchers, genealogists, and members of the public interested in participatory archiving of the Papers of the War Department. Their motivations include a genuine interest in Revolutionary War America, a sense of civic duty, personal scholarly interests, genealogy discoveries, pedagogical activities, and curiosity about how the transcription tool works. The creators' motivations were to create a platform for participatory archiving and to seek help from the public to improve the searchability of documents.

What Tasks do They Undertake?

Contributors browse and search for a document to work on. They then transcribe the document and record any marginalia or notes written on it. They can also track pages they have not yet edited using the Watchlist.

Other tasks include:

For those documents that are hard to read (due to handwriting or document quality), contributors can discuss and decipher certain portions of the document by using the View Notes section.

Contributors can browse the revision history page to see how specific transcriptions have changed over time.

What Kind of Interface do They Use?

Papers project editors created Scripto, an open-source transcription tool for transcript submission and knowledge building. MediaWiki is used for housing and revising transcriptions, as well as for managing user accounts. The team later released plugins for Omeka, Drupal, and WordPress. PHP is used for the design of display pages.

How are These Validated?

Once contributors submit transcriptions, the PWD editorial team reviews them for approval. Contributors are encouraged to use the View Notes section to raise questions or clarifications with their peers and with the editorial board. Questions raised by other contributors and by the PWD editorial team improve communication and the accuracy of transcriptions.

Sources:

Ridge, Mia. “Crowdsourcing Our Cultural Heritage: Introduction.” In Crowdsourcing Our Cultural Heritage, edited by Mia Ridge. Ashgate, 2014.

Leon, Sharon M. “Build, Analyse and Generalise: Community Transcription of the Papers of the War Department and the Development of Scripto.” In Crowdsourcing Our Cultural Heritage, edited by Mia Ridge. Ashgate, 2014.

Sherratt, Tim. “Crowdsourcing: Trove.” August 10, 2015. Available via YouTube: https://youtu.be/a0xc22DrdfU